What Is a Hyperscale Data Center?

Ask anyone to name the largest tech companies and most will come up with names like Amazon, Google, Microsoft, and Facebook.

These companies provide services that billions of people worldwide use to shop for goods, look for information, and check up on friends.

So how do these companies make their services available around the world? How do they cope with increasing data processing and storage demands?

The answer is with hyperscale data centers.

More companies are leveraging data to power their processes and deploying Internet of Things (IoT) devices to gather real-time insights.

Backroom servers and even traditional data centers don’t have the infrastructure to handle these demands. As a result, companies are investing heavily in hyperscale data centers.

This article will look at what a hyperscale data center is and the key factors that make up these facilities. We’ll also look at how companies are using hyperscale data centers to power and scale their operations.

What Is a Hyperscale Data Center?

A hyperscale data center is a very large facility that houses critical compute, storage, and network infrastructure.

These facilities allow companies like Amazon, Google, and Microsoft to draw on their processing power to deliver key services to customers worldwide.

There’s no official definition, but a hyperscale facility typically has at least 5,000 servers and is 10,000 square feet or more in size.

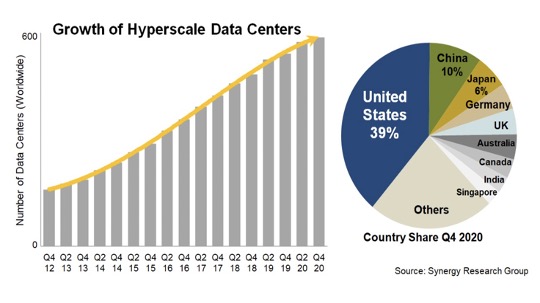

A report from Synergy Research Group found that more than 100 new hyperscale data centers were built in 2020 alone, bringing the total number of these facilities to 597.

The hyperscale data center market will continue to grow to meet rising demand for data processing and storage.

The US currently accounts for 39% of these facilities, as many as the next eight countries combined: China, Japan, Germany, the UK, Australia, Canada, India, and Singapore.

The leading hyperscale operators are, unsurprisingly, some of the largest tech companies in the world. Amazon, Microsoft, and Google dominate the hyperscale data center market and operate over half of these facilities.

Another distinguishing factor of a hyperscale facility is its ability to scale, meeting heavier workloads by adding computing resources.

Companies can scale their data centers in two ways:

- Horizontally: Scaling horizontally means adding more machines to your network infrastructure so the processing load is shared across them. If a business application can no longer handle additional traffic, adding new servers lets it absorb the extra workload.

- Vertically: Scaling vertically means adding computing resources like CPU and RAM to your existing machines. This increases the processing power of a machine without changing the application's code. Vertical scaling is easier to implement, but it is capped by the machine's hardware specifications.
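
To make the difference concrete, here is a minimal Python sketch that models capacity as CPU cores. The `Server` type, the per-server core count, and the `max_cores` ceiling are illustrative assumptions, not figures from any real provider: horizontal scaling grows the fleet, while vertical scaling grows one machine until it hits its hardware limit.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Server:
    cores: int      # CPU cores currently installed (hypothetical unit of capacity)
    max_cores: int  # assumed ceiling imposed by the machine's hardware


def scale_horizontally(fleet_size: int, demand_cores: int, cores_per_server: int) -> int:
    """Return how many identical servers are needed to cover demand_cores."""
    needed = -(-demand_cores // cores_per_server)  # integer ceiling division
    return max(fleet_size, needed)  # this sketch never shrinks the fleet


def scale_vertically(server: Server, demand_cores: int) -> Optional[Server]:
    """Upgrade a single server in place, or return None if demand exceeds its ceiling."""
    if demand_cores > server.max_cores:
        return None  # vertical scaling has hit the machine's specifications
    return Server(cores=max(server.cores, demand_cores), max_cores=server.max_cores)


# Horizontal: 200 cores of demand on 32-core servers needs 7 machines.
print(scale_horizontally(fleet_size=4, demand_cores=200, cores_per_server=32))
# Vertical: one machine can grow from 16 to 48 cores, but not to 200.
print(scale_vertically(Server(cores=16, max_cores=64), 48))
print(scale_vertically(Server(cores=16, max_cores=64), 200))
```

The `None` branch is the vertical limit in action: once a machine is maxed out, the remaining option is to add more machines.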

When a company builds an on-premises data center, it has to plan for both current and future processing needs. Getting that estimate wrong is costly in either direction.

If you “overbuild” a data center, you’ll have idle resources that you aren’t using. In other words, you’re operating an underutilized asset.

There’s also the risk that equipment becomes obsolete before you ever need its capacity.

On the other hand, “underbuilding” a data center is equally costly.

Servers that frequently run out of capacity become overloaded and can cause critical applications to stop functioning. This can lead to lost sales and even damage your reputation.
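
The trade-off can be sketched as a simple comparison between fixed built capacity and forecast demand. This is a minimal Python illustration, assuming capacity and demand are both measured in kilowatts of IT load; the site size and forecast values are made up.

```python
from typing import List, Tuple


def capacity_report(built_kw: float, forecast_kw: List[float]) -> List[Tuple[int, str, float]]:
    """Compare fixed built capacity against forecast demand for each period.

    Each entry is (period, status, kW): idle kW when the site is overbuilt,
    unmet kW when it is underbuilt.
    """
    report = []
    for period, demand in enumerate(forecast_kw, start=1):
        if demand <= built_kw:
            report.append((period, "idle", built_kw - demand))
        else:
            report.append((period, "shortfall", demand - built_kw))
    return report


# Hypothetical site built for 1,000 kW with demand growing over three periods.
for row in capacity_report(1000, [600, 900, 1200]):
    print(row)
```

Early periods show idle capacity (the overbuilt case) and the last shows unmet demand (the underbuilt case); a single fixed build cannot avoid both.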

These examples illustrate the importance of scalability.

That’s why companies are turning to cloud computing services from hyperscale operators like Amazon Web Services (AWS) and Microsoft Azure. Their hyperscale data centers are built to add capacity quickly as demand rises.

What Are the Key Factors of a Hyperscale Data Center?

Hyperscale data centers aren’t like traditional on-premises data centers. Here’s a look at some of the main elements that make up these massive facilities.

Site Locations

Location is one of the most important considerations for hyperscale data centers, as it affects the quality of service that the facilities can provide.

While placing a hyperscale facility in a rural area may be cheaper, the distance to end users can add noticeable network delays. An unreliable power grid can also lead to costly outages.
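
A rough lower bound on that delay comes from physics. Light in optical fiber travels at roughly 200,000 km/s, or about 200 km per millisecond, so every 100 km of distance adds about 1 ms of round-trip time before any routing or queuing delay. The Python sketch below assumes a straight fiber path, which real networks rarely have, so actual latency will be higher.

```python
# Approximate speed of light in optical fiber (refractive index ~1.5): ~200,000 km/s.
FIBER_KM_PER_MS = 200.0  # kilometres travelled per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over a straight fiber path (no routing or queuing)."""
    return 2 * distance_km / FIBER_KM_PER_MS


for km in (50, 500, 2000):
    print(f"{km:>5} km -> at least {min_round_trip_ms(km):.1f} ms round trip")
```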

These aren’t the only considerations. Providers also consider tax structures, access to carriers, and local labor pools when deciding on a location for a hyperscale data center.
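
One common way to weigh these competing factors is a weighted scoring matrix. The sketch below is a hypothetical example: the factor names, weights, and 0-10 ratings are illustrative assumptions, not any provider's actual criteria.

```python
from typing import Dict

# Hypothetical weights (they sum to 1.0); a real operator would set its own.
WEIGHTS: Dict[str, float] = {
    "latency_to_users": 0.30,
    "power_cost": 0.25,
    "grid_reliability": 0.20,
    "tax_structure": 0.10,
    "carrier_access": 0.10,
    "labor_pool": 0.05,
}


def site_score(ratings: Dict[str, float]) -> float:
    """Weighted sum of 0-10 ratings; a factor with no rating counts as 0."""
    return sum(weight * ratings.get(factor, 0.0) for factor, weight in WEIGHTS.items())


# Illustrative ratings for two imaginary candidate sites.
sites = {
    "rural": {"latency_to_users": 4, "power_cost": 9, "grid_reliability": 5,
              "tax_structure": 8, "carrier_access": 4, "labor_pool": 3},
    "metro": {"latency_to_users": 9, "power_cost": 5, "grid_reliability": 8,
              "tax_structure": 5, "carrier_access": 9, "labor_pool": 8},
}
for name, ratings in sites.items():
    print(f"{name}: {site_score(ratings):.2f}")
print("best:", max(sites, key=lambda name: site_score(sites[name])))
```

With these particular weights, cheaper rural power is outweighed by latency and grid reliability; shifting weight toward power cost can change the outcome.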

Energy Sources

Hyperscale data centers require a lot of energy to run their servers and cooling systems. Northern Virginia became the first data center market in the world to reach one gigawatt of capacity, enough to power about 700,000 homes.

While many of these facilities achieve a low PUE (Power Usage Effectiveness, the ratio of total facility energy to the energy used by IT equipment, where 1.0 is ideal), their sheer size and power demands mean that many providers build in areas where electricity is cheap.
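
Because PUE is total facility energy divided by IT equipment energy, a PUE of 1.2 means about 0.2 kWh of overhead (mostly cooling and power conversion) for every kWh that reaches the servers. A minimal Python sketch, using hypothetical energy figures:

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh: float, pue_value: float) -> float:
    """Energy spent on cooling, power conversion, lighting, etc. at a given PUE."""
    return it_equipment_kwh * (pue_value - 1)


# Hypothetical month: 1,000 MWh reaches IT equipment, 1,200 MWh enters the facility.
print(pue(1_200_000, 1_000_000))            # 1.2
print(round(overhead_kwh(1_000_000, 1.2)))  # about 200,000 kWh of overhead
```

Even at a low PUE, a facility whose IT load runs to hundreds of megawatts still spends tens of megawatts on overhead, which is why electricity prices weigh so heavily in site decisions.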

Others power their data centers from renewable sources to reduce their carbon footprint. For example, the Citadel Campus in Nevada is powered by 100% renewable energy from a combination of solar and wind farms.

Security

Another distinguishing factor between hyperscale data centers and on-premises infrastructure is the lengths operators go to in securing their facilities and preventing unauthorized access.

Google shared a video showcasing six layers of security in one of its data centers:

- Layer 1: Signage and fencing

- Layer 2: Secure perimeter with guard patrols and cameras

- Layer 3: Restricted building access

- Layer 4: Security Operations Center (SOC)

- Layer 5: Data center floor

- Layer 6: Hard drive destruction

Gaining unauthorized physical access to a data center protected this way is extremely difficult.

Hyperscale data centers also secure their networks against cyberattacks. Measures include configuring firewalls, patching systems promptly, and encrypting data both in transit and at rest.
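
As an illustration of one of these measures, firewalls are commonly configured with a default-deny policy: traffic is blocked unless a rule explicitly allows it. The Python sketch below is a simplified, hypothetical rule evaluator (first matching rule wins) built on the standard `ipaddress` module; real firewalls also match on protocol, destination address, and connection state.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rule:
    action: str  # "allow" or "deny"
    source: str  # source CIDR block, e.g. "10.0.0.0/8"
    port: int    # destination TCP port


def evaluate(rules: List[Rule], src_ip: str, port: int) -> str:
    """Return the action of the first matching rule, or "deny" if none match."""
    addr = ipaddress.ip_address(src_ip)
    for rule in rules:
        if rule.port == port and addr in ipaddress.ip_network(rule.source):
            return rule.action
    return "deny"  # default-deny: anything not explicitly allowed is blocked


# Hypothetical policy: HTTPS from anywhere, SSH only from the internal network.
rules = [
    Rule("allow", "0.0.0.0/0", 443),
    Rule("allow", "10.0.0.0/8", 22),
]
print(evaluate(rules, "203.0.113.7", 443))  # allow
print(evaluate(rules, "203.0.113.7", 22))   # deny
print(evaluate(rules, "10.1.2.3", 22))      # allow
```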

Automation

Hyperscale data centers contain thousands of servers and other types of hardware like routers, switches, and storage disks. Then there’s support infrastructure like power and cooling systems, uninterruptible power supplies (UPS), and air distribution systems.

Manually monitoring and making adjustments to these systems isn’t feasible on a large scale.

To manage their facilities, hyperscale data centers rely on automation: software that handles workflows like scheduling, monitoring, and application delivery without manual intervention.

One example is the use of DCIM (data center infrastructure management) software, which monitors, measures, and manages resources across the entire infrastructure.

Other automation examples include:

- Allocating assets based on available power and cooling

- Optimizing workloads across all hardware assets

- Sending alerts when certain thresholds are exceeded
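
The first and third items can be sketched in a few lines of Python. This is a hypothetical model, not how any particular DCIM product works. Each rack reports power capacity, power draw, and inlet temperature. New load goes to the rack with the most power headroom that is not running hot, and alerts fire when a rack crosses a load or temperature threshold. The 27 °C default matches the upper end of ASHRAE's commonly cited recommended inlet range; the 80% load limit is an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Rack:
    name: str
    power_capacity_kw: float
    power_used_kw: float
    inlet_temp_c: float


def place_workload(racks: List[Rack], demand_kw: float,
                   max_inlet_c: float = 27.0) -> Optional[Rack]:
    """Assign demand_kw to the rack with the most power headroom that is not running hot."""
    candidates = [
        r for r in racks
        if r.power_capacity_kw - r.power_used_kw >= demand_kw and r.inlet_temp_c <= max_inlet_c
    ]
    if not candidates:
        return None  # no rack has enough power and cooling headroom
    best = max(candidates, key=lambda r: r.power_capacity_kw - r.power_used_kw)
    best.power_used_kw += demand_kw  # record the new load against the chosen rack
    return best


def threshold_alerts(racks: List[Rack], max_load_ratio: float = 0.8,
                     max_inlet_c: float = 27.0) -> List[str]:
    """Return an alert message for every rack over its power-load or temperature threshold."""
    alerts = []
    for r in racks:
        load = r.power_used_kw / r.power_capacity_kw
        if load > max_load_ratio:
            alerts.append(f"{r.name}: power at {load:.0%} of capacity")
        if r.inlet_temp_c > max_inlet_c:
            alerts.append(f"{r.name}: inlet temperature {r.inlet_temp_c:.1f} C")
    return alerts


racks = [
    Rack("rack-a", power_capacity_kw=10.0, power_used_kw=7.0, inlet_temp_c=24.0),
    Rack("rack-b", power_capacity_kw=10.0, power_used_kw=4.0, inlet_temp_c=29.0),
    Rack("rack-c", power_capacity_kw=8.0, power_used_kw=3.0, inlet_temp_c=22.0),
]
placed = place_workload(racks, demand_kw=2.0)
print(placed.name if placed else "no capacity")
print(threshold_alerts(racks))
```

Here `rack-b` has the most power headroom but is skipped because it is running hot, which is exactly the kind of cross-check between power and cooling data that is impractical to do by hand across thousands of racks.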

This kind of automation delivers greater agility and maximizes efficiency. Gartner predicts that by 2025, 60% of organizations will use automation tools in their network infrastructure.

What Are the Benefits of a Hyperscale Data Center?

The sheer cost of building and maintaining a hyperscale data center puts it out of reach for most companies. At the same time, a traditional data center may not be robust enough to meet your processing needs.

Moving your company’s processing to a hyperscale cloud provider like Amazon Web Services (AWS) offers benefits like:

- Pay-as-you-go pricing: You only pay for the resources you use, and most providers don’t require long-term contracts.

- Increased flexibility: With your own virtual environment, you can select the operating system and programming language you prefer to work with.

- Better scalability: Features like Amazon EC2 Auto Scaling, combined with Elastic Load Balancing (ELB) to distribute incoming traffic, automatically scale resources up or down based on demand.

- Reduced downtime: Hyperscale data centers have numerous redundancies built in to ensure minimal downtime to your operations.

- Enhanced security: Robust encryption and multiple layers of physical and network security help keep your data safe.

- Managed IT services: Moving your IT operations to a cloud service can help reduce your costs and free up your staff for higher-value work.

- Compliance features: Compliance tools help your organization meet standards and regulations like ISO 27001, HIPAA, GDPR, and more.
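
Automatic scaling is often driven by a target metric. The Python sketch below shows a simplified proportional rule in the spirit of target-tracking policies: resize the group so average CPU utilization moves toward a target, within minimum and maximum bounds. It is an illustration under stated assumptions, not the algorithm AWS uses; real policies also apply cooldowns and instance warm-up periods, and the function and parameter names here are hypothetical.

```python
import math


def desired_capacity(current_instances: int, current_cpu_pct: float, target_cpu_pct: float,
                     min_instances: int = 2, max_instances: int = 20) -> int:
    """Proportional resize toward a target average CPU utilization, clamped to [min, max]."""
    if current_instances <= 0:
        return min_instances
    # If 4 instances average 90% CPU and the target is 60%, about 6 instances spread the same load.
    raw = math.ceil(current_instances * current_cpu_pct / target_cpu_pct)
    return max(min_instances, min(max_instances, raw))


print(desired_capacity(4, 90, 60))   # scale out to 6
print(desired_capacity(4, 30, 60))   # scale in to 2
print(desired_capacity(15, 90, 50))  # 27 would be needed, capped at 20
```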

Examples of Hyperscale Data Centers

Here’s a look at some of the largest hyperscale data centers in the world. Facilities like these will play a pivotal role as demand for digital services continues to surge.

Microsoft

Microsoft’s hyperscale data center in Northlake, Illinois, is one of the largest data centers in the world. It occupies more than 700,000 square feet and cost an estimated $500 million to build. This data center also has its own on-site electric substation.

Apple

Apple operates data centers around the world, but one of its largest is based in Maiden, North Carolina. The data center is 500,000 square feet and has a total of 184,000 square feet in raised floor space dedicated to colocation.

As part of its commitment to reach net-zero carbon emissions, Apple has built three solar farms and one 10-megawatt fuel cell installation to power its data center.

Google

Google has invested $1.2 billion in building its massive hyperscale data center in Lenoir, North Carolina. Exact specifications aren’t available, but the buildings are estimated to total more than 500,000 square feet.

Google chose Lenoir for its combination of “energy infrastructure, developable land, and available workforce.”

Conclusion

Companies are shifting more of their IT operations to hyperscale facilities, but many find it challenging to plan capacity accurately and maintain centralized records.

The solution is to use DCIM software, a powerful tool that allows you to monitor critical infrastructure, improve capacity planning, and optimize workloads.

Schedule a demo today to see how Nlyte’s DCIM software can help you manage and automate your entire compute infrastructure.